Unlocking Access: The Rise and Fall of Tokeo – A Journey of Innovation and Ethical Challenges

Introduction

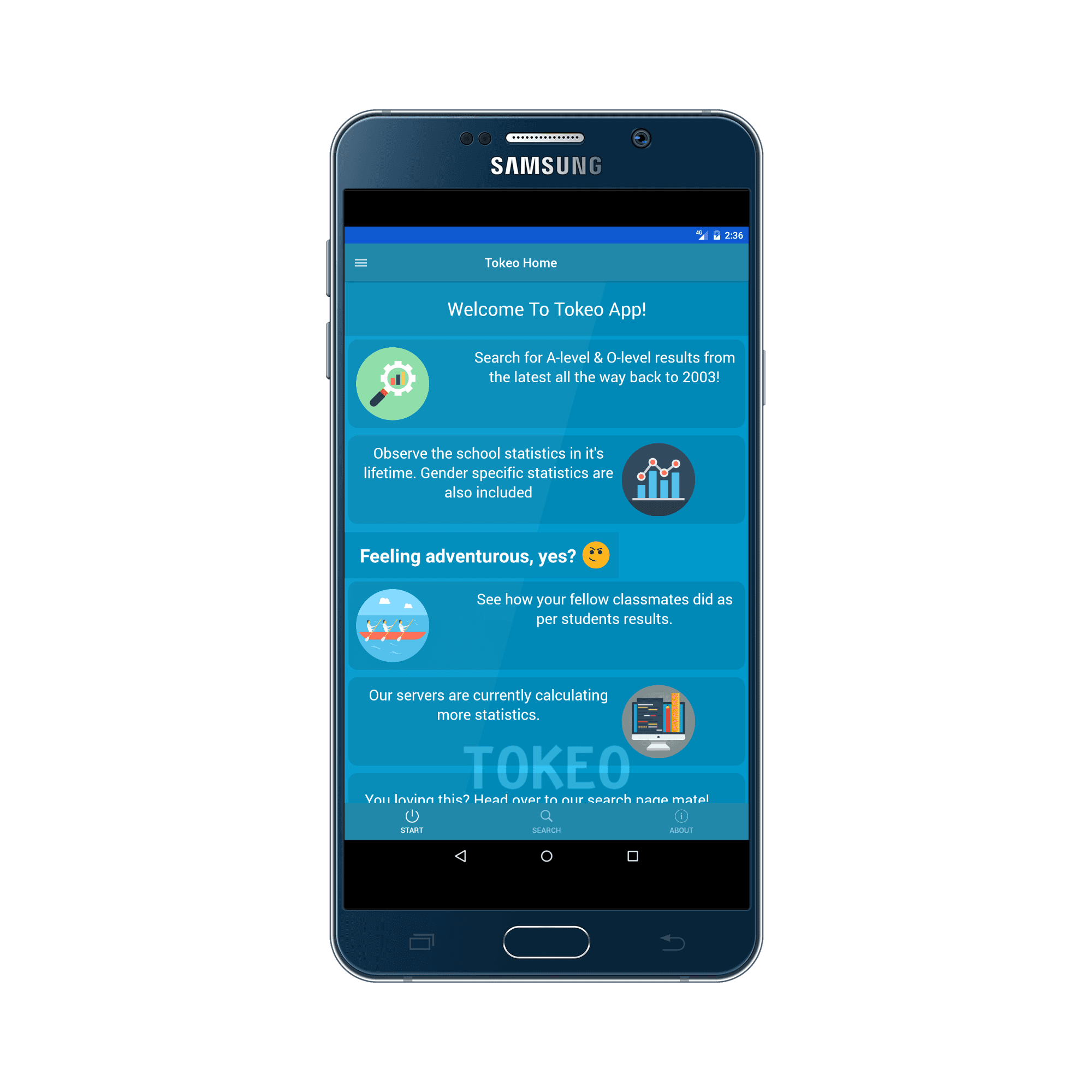

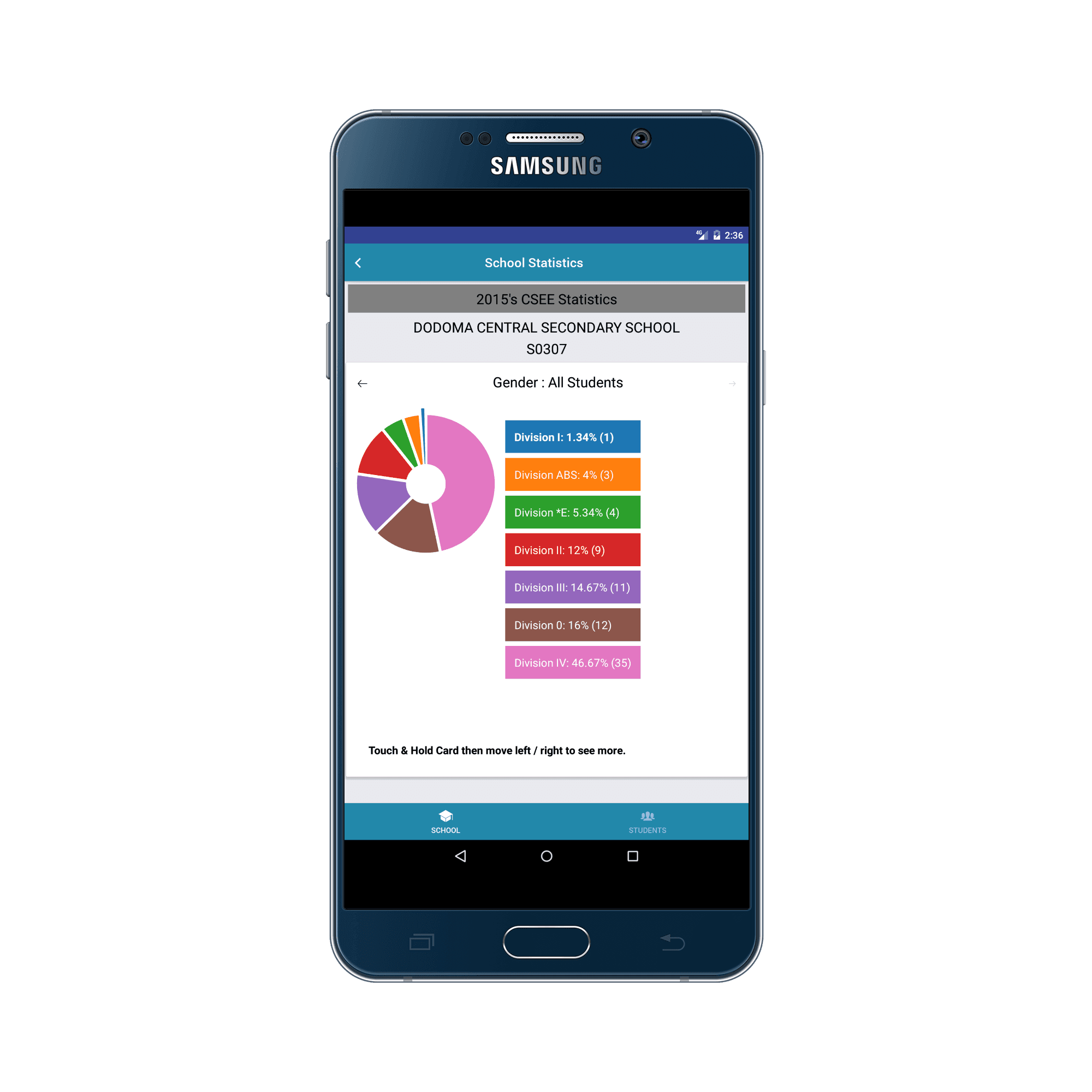

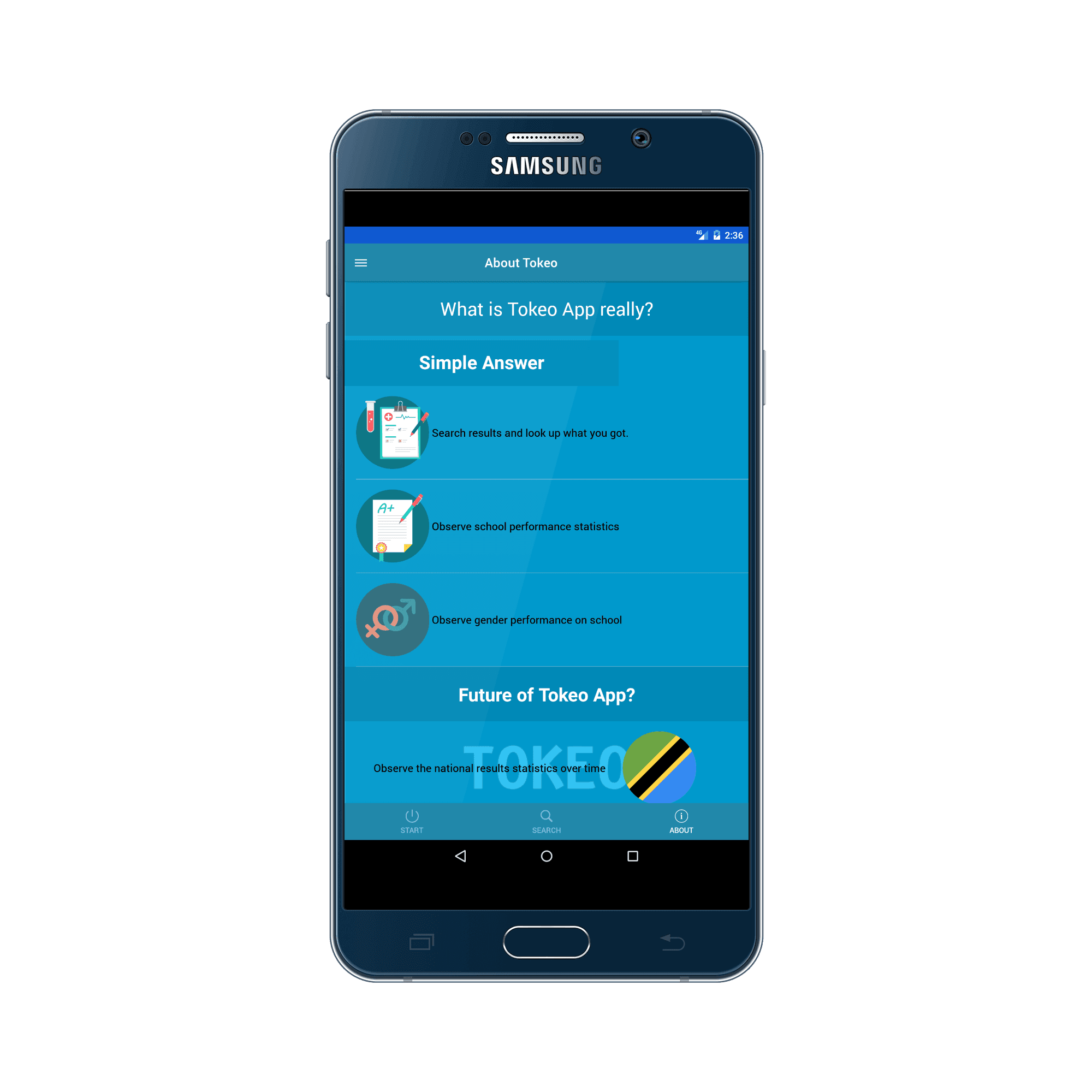

The Tokeo application was built to make examination results from the National Examinations Council of Tanzania (NECTA), covering 2003 to 2016, easier to access and organize. The mobile app simplified retrieving and analyzing examination data, giving users direct access to their academic records and to school statistics.

Technologies

Developing Tokeo meant working through several technologies as the requirements changed. The initial phase involved crawling the data with jQuery and storing it in a MongoDB database through Mongoose. As the project progressed, the data needed to move to a relational model. It was first migrated into PostgreSQL as JSON, then standardized and normalized to fourth normal form (4NF) using PL/pgSQL scripts.
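The move from nested documents to a relational model can be illustrated with a small sketch. The project did this step in PL/pgSQL. The version below uses plain JavaScript only to show the idea, and the document shape, field names, and sample values are all hypothetical rather than taken from the real data.

```javascript
// Hypothetical shape of crawled documents; every field name and value here
// is a made-up placeholder, not real NECTA data.
const crawled = [
  {
    year: 2015,
    school: { code: "X0001", name: "Example Secondary School" },
    candidates: [
      { number: "X0001/0001", division: "I", subjects: { CIV: "B", MATH: "A" } },
      { number: "X0001/0002", division: "II", subjects: { CIV: "C", MATH: "B" } },
    ],
  },
];

// Split nested documents into three flat "tables" linked by keys,
// so each fact is stored once instead of being repeated per document.
function normalize(documents) {
  const schools = new Map(); // keyed by school code to avoid duplicates
  const candidates = [];
  const grades = [];

  for (const doc of documents) {
    schools.set(doc.school.code, { code: doc.school.code, name: doc.school.name });

    for (const c of doc.candidates) {
      candidates.push({
        number: c.number,
        year: doc.year,
        schoolCode: doc.school.code, // foreign key to schools
        division: c.division,
      });

      // One row per (candidate, subject) pair instead of a nested object.
      for (const [subject, grade] of Object.entries(c.subjects)) {
        grades.push({ candidateNumber: c.number, year: doc.year, subject, grade });
      }
    }
  }

  return { schools: [...schools.values()], candidates, grades };
}

const tables = normalize(crawled);
console.log(tables.schools.length, tables.candidates.length, tables.grades.length);
// prints: 1 2 4
```

Keeping schools, candidates, and grades in separate row sets is what lets a relational database answer questions such as per-school statistics without scanning every document.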

The backend API was written in Node.js, using the Knex.js library for SQL query building. The frontend user interface, which integrated with this API, was developed with React Native.
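To show what SQL query building does for an API like this, here is a dependency-free sketch. It is a stand-in written for illustration, not Knex.js itself and not the project's actual code. The table name `results` and the columns `school_code`, `year`, and `division` are assumptions.

```javascript
// Stand-in for a query builder: turns optional filters into parameterized SQL.
// This is NOT the Knex.js API; table and column names are hypothetical.
function buildResultsQuery(filters) {
  // Only whitelisted columns may be filtered on, so user input never
  // reaches the SQL text as a column name.
  const allowed = ["school_code", "year", "division"];
  const clauses = [];
  const bindings = [];

  for (const column of allowed) {
    if (filters[column] !== undefined) {
      bindings.push(filters[column]);
      // Values are passed as numbered placeholders ($1, $2, ...) rather
      // than being concatenated into the query string.
      clauses.push(`${column} = $${bindings.length}`);
    }
  }

  const where = clauses.length > 0 ? ` WHERE ${clauses.join(" AND ")}` : "";
  return { text: `SELECT * FROM results${where} ORDER BY year`, bindings };
}

const query = buildResultsQuery({ school_code: "X0001", year: 2015 });
console.log(query.text);
// prints: SELECT * FROM results WHERE school_code = $1 AND year = $2 ORDER BY year
console.log(query.bindings);
// prints: [ 'X0001', 2015 ]
```

A library such as Knex.js handles this composition, plus dialect differences and escaping, which is why an API with many optional filters tends to use a builder instead of assembling SQL strings by hand.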

Inspiration

The inspiration for Tokeo came from the shortcomings of the existing result retrieval system provided by NECTA. Users had to search through archives manually, and access to historical data was limited. Recognizing the need for a more efficient and user-friendly solution, the Tokeo project aimed to streamline access to examination results and open them up to a broader audience.

Impact

The impact of the Tokeo application transcended its technical functionalities, reflecting a commitment to accessibility and innovation in education. By providing a user-friendly platform for accessing examination results, Tokeo empowered individuals to navigate academic records with ease and efficiency. Despite being developed as a passion project without commercial intent, Tokeo garnered over 500 downloads on the Play Store, resonating with users who sought a simplified solution to accessing historical examination data.

The project's journey fostered growth and learning, honing skills in network-tolerant application development, functional programming, and effective problem-solving. The commitment to addressing a pressing societal need underscored the ethos of the project, embodying the spirit of innovation and community impact.

Regrettably, despite its potential to make examination results far more accessible, Tokeo faced an unexpected hurdle that ultimately led to its shutdown. The project ran into copyright infringement issues over its use of raw NECTA data, which contravened legal standards in the country. The setback underscored the legal complexity of data usage and distribution, and the need to respect intellectual property rights and legal frameworks.